In teaching and learning, not all questions are created equal. Some questions have one objectively correct response, while others are subjective and admit many valid responses.

Learning activities built around opinions, predictions, or metacognition all elicit highly individual responses, and they are essential to a robust learning experience.

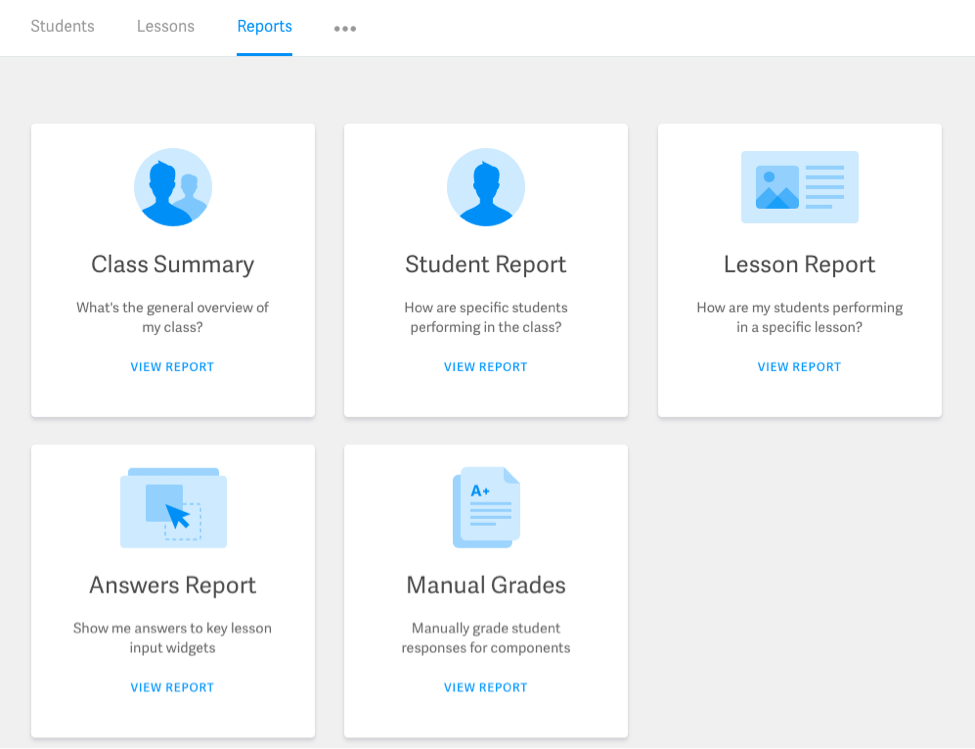

That’s why we introduced the Manual Grading Report to the Smart Sparrow Platform. Instructors can now choose to grade learner responses to any component manually, then review and score each response individually in the Platform.

Examples of when to use Manual Grading

Instructors may want to grade learner responses manually for many reasons, and there are plenty of creative ways to use the feature. For example:

- Writing prompts, in particular, elicit unique responses from every learner. Grading them requires a human touch, as instructors weigh grammar, organization of ideas, analysis, thoughtfulness, and the overall quality of the work.

- The scientific process requires scientists (or learners) to form hypotheses, make observations, and revise their initial hypotheses based on the data they gather. With manual grading, you can ask students to share their analyses at any point in the process, and grade their responses on completeness, depth, and how well they use the gathered data to draw conclusions.

- If you’re a math instructor who prefers to grade the process rather than only the result, ask students to show their complete step-by-step work in the Expression Editor or Rich Text Editor component, then enable manual grading to award full or partial credit.

Lesson scoring

Manual grading is only one way to score learner performance. For completion-based grading or objective responses, the Platform can still score work automatically. More details about scoring are available here.